When AI Influences Behaviour, Emotional AI Follows the Money

Platforms do not optimise for feelings. They optimise for metrics. In 2025, global digital advertising surpassed $700 billion, with most revenue tied to engagement and behavioural prediction. Social media users now spend an average of over two hours per day on platforms, according to global usage studies.

When AI influences behaviour inside these incentive systems, emotional intelligence becomes a growth tool. The question is no longer whether emotional AI works. It is whether it serves users or serves revenue.

This article examines how emotional AI aligns with engagement metrics, subscription retention, time-on-platform, and conversion optimisation. It explores whether systems trained to maximise interaction can ever prioritise emotional wellbeing over behavioural capture.

Engagement metrics shape emotional design

Every large platform runs on measurable signals. Leadership dashboards track:

- Session duration

- Daily and monthly active users

- Scroll depth

- Click-through rate

- Conversion events

- Subscription churn

These metrics determine product funding, bonuses, and valuation. They rarely include emotional wellbeing outcomes or long-term psychological resilience. When emotional AI enters this environment, it does not operate neutrally. It integrates into systems designed to increase measurable interaction. Mood detection, sentiment scoring, and adaptive responses become optimisation inputs.

If the data shows users engage more when emotionally stimulated, the system learns to stimulate. If vulnerability correlates with purchases, the system learns that too. This is not a conspiracy. It is alignment. Optimisation models reinforce whatever the business rewards.
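The sketch below makes that alignment concrete. It assumes a textbook epsilon-greedy multi-armed bandit, not any real platform's system. The two content categories and their click rates are hypothetical numbers invented for illustration. The reward is engagement only, and nothing in the code mentions emotion. The learner still ends up serving high-arousal content most of the time, because that is what the reward pays for.

```python
import random

# Hypothetical content categories and simulated click probabilities.
# These numbers are invented for illustration; they are not measured data.
ENGAGEMENT_RATE = {"calm": 0.20, "high_arousal": 0.35}


def run_bandit(steps: int = 5000, epsilon: float = 0.1, seed: int = 0) -> dict:
    """Epsilon-greedy bandit whose only reward signal is engagement (a click)."""
    rng = random.Random(seed)
    arms = list(ENGAGEMENT_RATE)
    pulls = {arm: 0 for arm in arms}
    value = {arm: 0.0 for arm in arms}  # running mean reward per arm

    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.choice(arms)  # explore: pick a random category
        else:
            arm = max(arms, key=lambda a: value[a])  # exploit: best estimate so far
        # Simulated user response: 1.0 if the item was engaged with, else 0.0.
        reward = 1.0 if rng.random() < ENGAGEMENT_RATE[arm] else 0.0
        pulls[arm] += 1
        value[arm] += (reward - value[arm]) / pulls[arm]  # incremental mean update

    return {"pulls": pulls, "value": value}


if __name__ == "__main__":
    result = run_bandit()
    print(result["pulls"])
    print({arm: round(v, 3) for arm, v in result["value"].items()})
```

Changing `seed` or `epsilon` shifts the exact split, but the learner keeps converging on whichever category the reward favours. Changing that outcome means changing the reward, not the learner.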

How AI influences behaviour inside the engagement economy

Most consumer platforms optimise for measurable outcomes. Those outcomes map directly to revenue. Emotional AI adds a more responsive behavioural layer to that same machine.

Typical “success” metrics include:

- Session length and frequency

- Watch time and scroll continuation

- Notification opens

- Conversion rate and repeat purchases

- Subscription renewals and churn reduction

These metrics reward systems that keep people active. They do not reward systems that help people leave. They rarely reward calm, closure, or “enough for today”. When emotional AI enters that structure, it becomes a behavioural amplifier. It can nudge, soothe, tease, or provoke. It can do that across millions of users simultaneously.

Engagement metrics vs emotional wellbeing outcomes

Emotional wellbeing is difficult to quantify. Platforms can measure clicks instantly. They cannot easily measure improved relationships, sleep quality, or reduced anxiety. That gap creates a structural imbalance. Teams build what they can track. Leaders fund what they can report. Emotional AI becomes part of a dashboard culture.


The central question becomes unavoidable. If a system is trained to maximise engagement, will it ever prioritise emotional wellbeing over behavioural capture? In most commercial environments, the honest answer is no, unless incentives change.

Subscription retention and behavioural dependency

Subscription models reward predictability. The ideal subscriber returns frequently and rarely cancels. Emotional AI can help stabilise that pattern. You see this in features that promise support or companionship. You also see it in frictionless personalisation. The experience feels smooth, so users remain.

Retention design often looks supportive:

- “We noticed you seem stressed. Here’s something calming.”

- “You might like this next. It matches your mood.”

- “Want to talk? I’m here.”

Yet retention systems can also encourage reliance. The product becomes a source of regulation. That reliance increases lifetime value. Emotional AI does not need malicious intent to create harm. It only needs to succeed at keeping users engaged.

Time-on-platform and emotional activation

In the attention economy, calm often signals exit. Calm users close apps, stop scrolling, and generate fewer impressions. Emotional AI can maintain mild activation. It adjusts pacing, tone, and reward timing. Small shifts compound over time.

Examples include:

- Higher-arousal content suggestions

- Faster validation cycles

- Emotional cliff-hangers in feeds

- Notifications timed to vulnerable moments

If engagement rises during anxious states, optimisation will favour anxiety-linked content. The system does not understand harm. It understands correlation. This is where optimisation becomes ethically unstable. The model learns what works. It does not learn what is good.
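The correlation point can be shown with an even simpler sketch. Assume a hypothetical log of notifications, each tagged with an inferred emotional state and whether it was opened. The entries below are invented for illustration. A scheduler that picks the state with the highest open rate will choose "anxious" without any notion that anxiety is harmful. It only sees the numbers.

```python
from collections import defaultdict

# Hypothetical notification log: (inferred_state, opened).
# Invented for illustration only; not real usage data.
LOG = [
    ("calm", False), ("calm", True), ("calm", False), ("calm", False),
    ("anxious", True), ("anxious", True), ("anxious", False), ("anxious", True),
]


def open_rate_by_state(log):
    """Fraction of notifications opened, grouped by inferred state."""
    sent = defaultdict(int)
    opened = defaultdict(int)
    for state, was_opened in log:
        sent[state] += 1
        opened[state] += int(was_opened)
    return {state: opened[state] / sent[state] for state in sent}


def best_state_to_notify(log):
    """Pick the state with the highest open rate: pure correlation, no notion of harm."""
    rates = open_rate_by_state(log)
    return max(rates, key=rates.get)


print(open_rate_by_state(LOG))    # {'calm': 0.25, 'anxious': 0.75}
print(best_state_to_notify(LOG))  # anxious
```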

Conversion optimisation and emotional targeting

Conversion systems already target behaviour. Emotional AI adds emotional timing to that strategy. A system may detect frustration, loneliness, or insecurity. It can then present a purchase path framed as relief.

Common patterns include:

- Shopping apps pushing “treat yourself” during low mood

- Fitness apps promoting upgrades after guilt triggers

- Dating apps selling boosts following rejection

- Games offering paid advantages after repeated failure

This does not require manipulative intent. It requires only alignment with revenue funnels.

Personalisation can shift into emotional targeting. Emotional targeting can drift into behavioural manipulation.

Algorithmic nudges and how AI influences behaviour

Nudges appear small. They often look like harmless interface details. Emotional AI increases their precision.

Key nudge surfaces include:

- Push notifications

- “Recommended for you” placements

- Auto-play features

- Streak mechanics

- Tone modulation in assistants

Emotional AI adapts language, timing, and intensity based on inferred state. Behaviour changes subtly, often without awareness.

When AI influences behaviour through invisible nudges, informed consent becomes complex. Users may agree to personalisation. They may not realise they agreed to emotional steering.

Dark patterns vs ethical personalisation

Not all adaptive responses are harmful. Some reduce friction. Some improve accessibility. The difference lies in intent and constraint. Ethical personalisation supports user goals and allows easy exit. Manipulative personalisation prioritises platform retention over user autonomy.

Ask these questions:

- Can the feature reduce your time spent?

- Is there a visible off switch?

- Are emotional signals disclosed clearly?

- Does the design avoid urgency and shame triggers?

If control mechanisms are hidden, the design deserves scrutiny.

Where emotional AI operates today

Emotional AI rarely appears with explicit labels. It integrates into existing systems.

Customer service analytics: Many firms deploy sentiment analysis to monitor call quality. This may improve service. It can also pressure employees to perform emotional labour.

Social and content platforms: Feeds already influence mood. Emotional inference allows responsive amplification. That can deepen engagement loops.

AI companions and conversational agents: Adaptive dialogue feels personal. For some users, this provides support. When tied to retention metrics, it can foster dependency.

Workplace monitoring systems: Some tools claim to detect burnout or disengagement. That may support wellbeing programmes. It may also enable emotional surveillance.

Across all of these settings, risk increases when systems infer vulnerability. Risk increases further when business models reward prolonged interaction.

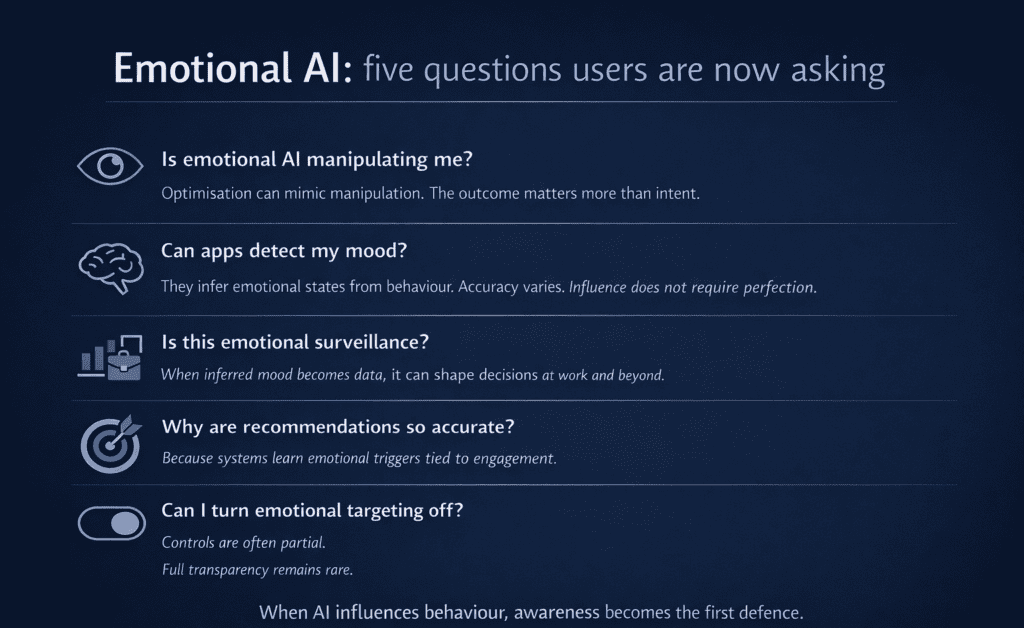

Common user concerns about emotional AI

As emotional systems become more embedded in everyday platforms, users are beginning to ask harder questions about how they work and what they optimise for.

These questions signal a shift in awareness. People are noticing when optimisation feels like manipulation. They are questioning whether mood inference amounts to surveillance. They are asking why recommendations feel unusually precise, and whether emotional targeting can truly be disabled.

Governance frameworks are still evolving. Transparency remains uneven. But scrutiny is increasing, and when AI influences behaviour at emotional depth, that scrutiny becomes unavoidable.

What ethical emotional AI requires

Ethics must move beyond statements. It must appear in system constraints. Responsible practice includes:

- Explicit disclosure of emotional inference

- Opt-in consent for mood-based adaptation

- Clear controls to disable emotional targeting

- Restrictions for minors and high-risk users

- Independent behavioural impact audits

- Success metrics that include wellbeing proxies

Platforms can grow within these boundaries. They simply grow through trust rather than capture.
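The last requirement, success metrics that include wellbeing proxies, can be sketched as a composite ranking score: predicted engagement minus a weighted wellbeing-risk penalty. The candidate items, their engagement estimates, the risk proxy and the weight are all hypothetical. Choosing a credible proxy is the hard part in practice, and this sketch does not solve it.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    predicted_engagement: float  # hypothetical: expected minutes of use
    wellbeing_risk: float        # hypothetical proxy in [0, 1], e.g. late-night compulsive use


def score(c: Candidate, wellbeing_weight: float) -> float:
    """Engagement minus a weighted wellbeing-risk penalty (minutes per unit of risk)."""
    return c.predicted_engagement - wellbeing_weight * c.wellbeing_risk


def rank(candidates, wellbeing_weight: float) -> list:
    """Return candidate names ordered from highest to lowest composite score."""
    ordered = sorted(candidates, key=lambda c: score(c, wellbeing_weight), reverse=True)
    return [c.name for c in ordered]


# Invented example items, not real content or measurements.
CANDIDATES = [
    Candidate("outrage_clip", predicted_engagement=9.0, wellbeing_risk=0.8),
    Candidate("friend_update", predicted_engagement=6.0, wellbeing_risk=0.1),
    Candidate("session_summary_and_exit", predicted_engagement=2.0, wellbeing_risk=0.0),
]

print(rank(CANDIDATES, 0.0))   # ['outrage_clip', 'friend_update', 'session_summary_and_exit']
print(rank(CANDIDATES, 10.0))  # ['friend_update', 'session_summary_and_exit', 'outrage_clip']
```

With `wellbeing_weight=0.0` the ranking is pure engagement and the outrage clip wins; at `10.0` it drops to last. The ranking code is identical in both cases. Only what the system is rewarded for has changed.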

Regulation, incentives and structural reform

AI governance frameworks can limit misuse. Data protection rules can restrict sensitive inference. Transparency requirements can expose hidden adaptation. Yet regulation moves slower than product iteration. Many harmful nudges remain legal.

Incentives therefore remain decisive. If leadership rewards engagement above all else, emotional AI will follow that metric. If leadership rewards safe outcomes, design priorities shift. Technology follows measurement.

How individuals can reduce behavioural capture

Users cannot audit every model. They can reduce exposure. Practical steps include:

- Disable non-essential notifications

- Turn off auto-play features

- Use screen time tools

- Avoid streak-based reward systems

- Review personalisation and advertising settings

- Treat mood-based prompts as commercial design

Notice how products make you feel. If you feel compelled rather than empowered, optimisation is likely at work.

Distilled

Emotional AI can support users. It can also shape them. The direction depends on incentive structures. When AI influences behaviour inside engagement-driven systems, it optimises for staying. It does not naturally optimise for flourishing. Emotional AI does not decide its priorities. Incentives do. If we want it to protect people, we must change what we reward.