AI Audit: Accountability and Oversight in Enterprise AI

Artificial intelligence now makes decisions that directly affect customers, employees, and revenue. As AI moves deeper into enterprise operations, AI audit has become the primary tool organisations use to demonstrate control, accountability, and trust. The difficulty is that most enterprise AI systems change continuously, while traditional audit models assume stability.

This article looks at what AI audits involve in practice, why snapshot audits struggle in live environments, and how enterprises are adapting governance to keep pace with changing systems.

Definition and practical scope of an AI audit

An AI audit is a structured review of how an AI system behaves in real operating conditions, not just how it was designed. It evaluates whether the system produces reliable, fair, and explainable outcomes, and whether those outcomes can be traced, challenged, and corrected.

In enterprise settings, an AI audit typically examines:

- Training and inference data sources, including updates over time

- Model versions and retraining schedules

- Decision logic, thresholds, and overrides

- Accuracy and error rates in production

- Bias across protected and operational groups

- Logging, traceability, and escalation paths

Crucially, an AI audit does not only ask whether a model works. It asks whether the organisation can reconstruct why a specific decision occurred weeks or months later.

Drivers pushing enterprises toward AI audits

AI audits are no longer driven solely by ethics statements. They are driven by concrete risk.

Regulatory and legal exposure

Regulations such as the EU AI Act, and legislative proposals such as the US Algorithmic Accountability Act, call for evidence of ongoing oversight. Enterprises must demonstrate that AI systems are monitored after deployment, not just approved beforehand. Regulators increasingly ask for audit trails, impact assessments, and proof of corrective action. A one-time audit rarely satisfies these requirements.

Operational failures in production AI

Many AI systems pass internal reviews but fail later due to drift. Hiring models trained on historical data degrade as job markets change. Fraud models over-flag legitimate customers after data distributions shift. Recommendation systems amplify unintended behaviour as feedback loops develop. These failures rarely appear in pre-deployment audits.
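One common way to quantify this kind of distribution shift is the population stability index (PSI), which compares the binned proportions of a feature at audit time with the proportions seen in production. The sketch below is a minimal, standard-library illustration. The function names, bin edges, and sample values are assumptions made for the example, not part of any particular audit framework.

```python
import math
from bisect import bisect_right


def bin_proportions(values, edges, floor=1e-6):
    """Share of values falling in each bin defined by sorted interior edges.

    A small floor replaces empty bins so the logarithm below stays defined.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    total = len(values)
    return [max(c / total, floor) for c in counts]


def population_stability_index(baseline, production, edges):
    """PSI = sum((p - b) * ln(p / b)) over bins; 0 means identical proportions."""
    b = bin_proportions(baseline, edges)
    p = bin_proportions(production, edges)
    return sum((pi - bi) * math.log(pi / bi) for bi, pi in zip(b, p))


# Hypothetical transaction amounts at audit time vs. in production.
audited = [10, 12, 15, 20, 22, 25, 30, 35, 40, 45]
live = [30, 35, 40, 45, 50, 55, 60, 65, 70, 80]
edges = [20, 40, 60]  # illustrative bin boundaries

psi_same = population_stability_index(audited, audited, edges)
psi_shifted = population_stability_index(audited, live, edges)
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the right cut-off depends on the feature and the cost of a missed alert, so it is best treated as a tunable assumption.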

Reputational damage from AI bias scandals

AI bias scandals often emerge months after deployment, not at launch. Systems that appeared fair during testing later produce skewed outcomes once real users interact with them. Enterprises are learning that governance must extend beyond launch gates. This has made enterprise AI governance a standing operational concern rather than a policy exercise.

AI audit best practices that actually reduce risk

Effective AI audits focus on control points rather than documentation volume.

Practical AI audit best practices include:

- Named owners for each AI system and decision domain

- Versioned records of data, models, prompts, and thresholds

- Periodic AI bias audits using live production samples

- Continuous AI accuracy assessment against business outcomes

- Decision logs that capture inputs, outputs, and overrides

- Defined remediation paths when metrics cross risk thresholds

Enterprises that treat audits as living systems reduce surprise failures. Those that treat audits as reports accumulate hidden risk.
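To make the practices above concrete, here is a minimal sketch of a decision log that ties each decision to a named owner, versioned artefacts, inputs, output, and any override, so the decision can be reconstructed later. The `DecisionRecord` fields, the helper names, and the sample values are all hypothetical. A real system would write to durable, append-only storage rather than an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    owner: str                 # named owner of the system or decision domain
    model_version: str
    data_version: str
    threshold: float
    inputs: dict
    score: float
    outcome: str
    override_by: Optional[str] = None  # set when a human overrides the model


def append_record(log: list, record: DecisionRecord) -> None:
    """Serialise the record as one JSON line, as an append-only log would."""
    log.append(json.dumps(asdict(record), sort_keys=True))


def reconstruct(log: list, decision_id: str) -> Optional[DecisionRecord]:
    """Find a past decision and rebuild the full record behind it."""
    for line in log:
        data = json.loads(line)
        if data["decision_id"] == decision_id:
            return DecisionRecord(**data)
    return None


# Hypothetical lending decision that an analyst later overrode.
log = []
append_record(log, DecisionRecord(
    decision_id="d-001", owner="credit-risk-team", model_version="2.3.1",
    data_version="2024-05", threshold=0.7,
    inputs={"income": 52000, "tenure_months": 18},
    score=0.64, outcome="declined", override_by="analyst-17",
))
past = reconstruct(log, "d-001")
```

Storing the threshold and versions alongside each decision, rather than looking them up from current configuration, is what allows the record to explain a decision after the system has been retrained.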

Types of AI audit used in enterprise environments

Most enterprises do not choose one type of AI audit. They accumulate them over time. That is usually where the confusion starts.

An AI ethics audit often appears first. It is the one discussed in steering committees and board decks. Teams use it to agree on principles like fairness and transparency before a system goes live. This works well for setting intent. It does very little once the system starts making real decisions.

Then comes the AI bias audit. This is where teams check whether outcomes look balanced across groups. For example, a hiring or lending model might appear fair during testing, then drift as new data enters the pipeline. Bias audits catch problems early, but their findings go stale as data and models change.
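As an illustration of what "balanced across groups" can mean in practice, the sketch below computes approval rates per group from a sample of decisions and the ratio between the lowest and highest rate. The function names, group labels, and sample counts are invented for the example. The 0.8 cut-off echoes the four-fifths rule of thumb from US employment guidance and is an assumption here, not a universal standard.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical production sample: (group label, model approved?)
sample = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)

rates = selection_rates(sample)
ratio = impact_ratio(rates)
needs_review = ratio < 0.8  # illustrative threshold
```

Running the same check on fresh production samples at regular intervals, rather than once on test data, is what keeps the result from ageing.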

Accuracy checks usually follow. An AI accuracy assessment looks at whether the system is still doing its job. A fraud model might begin with strong results, then slowly decline as behaviour changes. Accuracy audits highlight performance drops, but they do not explain who is affected or who is accountable.
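A slow decline of this kind is easier to see when accuracy is tracked over a rolling window of recent decisions rather than over the model's whole lifetime. The sketch below is one minimal way to do that. The `RollingAccuracy` class, the window size, and the labelled outcomes are assumptions for illustration.

```python
from collections import deque


class RollingAccuracy:
    """Accuracy over the most recent `window` labelled decisions."""

    def __init__(self, window: int):
        # deque with maxlen silently drops the oldest result when full
        self.results = deque(maxlen=window)

    def record(self, predicted, actual) -> None:
        self.results.append(predicted == actual)

    def accuracy(self):
        if not self.results:
            return None  # no labelled outcomes yet
        return sum(self.results) / len(self.results)


# Hypothetical stream of (predicted, actual) fraud labels, oldest first.
tracker = RollingAccuracy(window=4)
for predicted, actual in [(1, 1), (1, 1), (0, 0), (1, 0), (0, 1), (1, 0)]:
    tracker.record(predicted, actual)

recent = tracker.accuracy()  # reflects only the last four outcomes
```

Lifetime accuracy over all six outcomes would still look moderate here, while the rolling figure exposes the recent decline.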

Compliance audits arrive when regulation enters the picture. These reviews focus on documentation, approvals, and evidence. They help organisations defend decisions after the fact. They rarely change how systems behave day to day.

Only later do some enterprises move toward continuous AI audits. Instead of reviewing the system occasionally, they monitor it as it operates. Changes in drift, bias, or accuracy automatically trigger alerts and review. This approach reflects how AI actually behaves in production, but it requires engineering effort and a willingness to treat governance as ongoing work.
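The core of a continuous audit can be sketched as a set of risk thresholds evaluated against the latest monitoring metrics. The example below is a simplified illustration. The `Threshold` type, the metric names, and the limits are hypothetical, and a production setup would route alerts to the named owner instead of returning strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    higher_is_worse: bool  # True for drift scores, False for accuracy-style metrics


def breached(metrics, thresholds):
    """Return a message for every metric that crossed its limit or is missing."""
    alerts = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            # Absent evidence is itself a finding in an audit context.
            alerts.append(f"{t.metric}: missing")
            continue
        crossed = value > t.limit if t.higher_is_worse else value < t.limit
        if crossed:
            alerts.append(f"{t.metric}: {value} vs limit {t.limit}")
    return alerts


# Illustrative limits; real values depend on the system and its risk appetite.
thresholds = [
    Threshold("psi_drift", 0.25, higher_is_worse=True),
    Threshold("impact_ratio", 0.8, higher_is_worse=False),
    Threshold("accuracy", 0.9, higher_is_worse=False),
]
latest = {"psi_drift": 0.31, "impact_ratio": 0.85}  # accuracy was not reported
alerts = breached(latest, thresholds)
```

Treating a missing metric as an alert is a deliberate choice in this sketch: a monitoring gap should surface as loudly as a breached limit.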

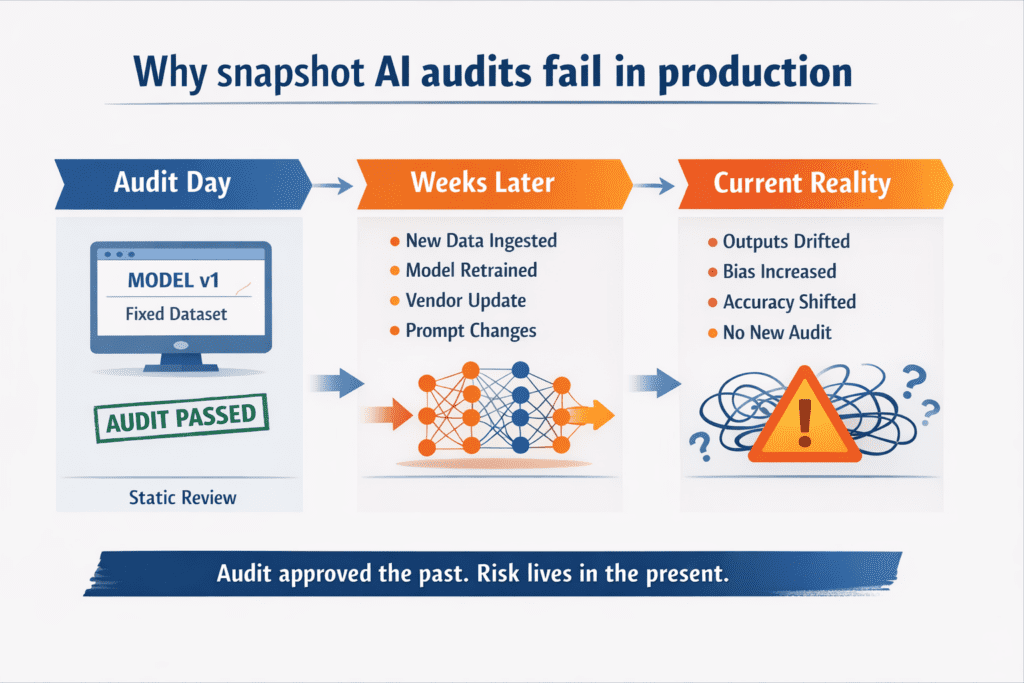

Snapshot audits and the mismatch with live AI systems

Snapshot AI audits evaluate systems at a single point in time. In enterprise environments, AI systems continue to change after deployment through retraining, new data, and external updates. This creates a growing gap between what was audited and what actually runs in production.

Snapshot audits approve a moment in the past, not ongoing behaviour. As models drift and inputs change, risk shifts into areas traditional audits never revisit.
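One simple way to detect that gap is to fingerprint the configuration that was audited and compare it with what is currently deployed. The sketch below assumes the relevant state can be captured as a small dictionary. The field names and values are hypothetical, and real systems would also need to cover data and prompt versions.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of a configuration, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(audited: dict, deployed: dict) -> list:
    """Names of fields whose values differ between audit time and now."""
    keys = sorted(set(audited) | set(deployed))
    return [k for k in keys if audited.get(k) != deployed.get(k)]


# Hypothetical state recorded at audit sign-off vs. what runs today.
audited = {"model_version": "2.3.1", "threshold": 0.7, "data_version": "2024-05"}
deployed = {"model_version": "2.4.0", "threshold": 0.65, "data_version": "2024-05"}

stale = fingerprint(audited) != fingerprint(deployed)
drifted = changed_fields(audited, deployed)
```

A mismatch does not mean the deployed system is unsafe, only that the audit's conclusions no longer describe it and a review is due.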

What different AI audits are actually good for

No single AI audit covers every risk. Enterprises usually rely on several audit types, each designed to solve a specific problem. The key is understanding what each audit can realistically control and where its limits begin.

- AI ethics audits: Useful for setting intent, values, and acceptable use. They guide direction but do not monitor live system behaviour.

- AI bias audits: Effective for identifying fairness issues at a specific point in time. They struggle to capture bias that emerges later through drift and retraining.

- AI accuracy assessments: Help validate performance and reliability in production. They support operational trust but do not address accountability or explainability.

- Compliance-focused AI audits: Designed to meet regulatory and legal requirements. They provide defensibility, not behavioural control.

- Continuous AI audits: Monitor system behaviour over time using live signals such as drift, bias, and accuracy thresholds. They require investment but enable real enterprise AI governance.

Distilled

AI audits are no longer about approval. They are about maintaining control over systems that continue to change after deployment. Enterprises operating AI at scale cannot rely on snapshot audits to govern models that retrain, adapt, and evolve continuously.

Effective AI audit programmes combine different audit types with continuous monitoring and clear ownership. When AI behaviour shifts every week, accountability cannot be periodic. It must exist every day. That is the standard modern enterprise AI governance must now meet.